In my previous articles I have explored the benefits of robots in our society, ranging from farming and social care to, more recently, the use of robotic equipment in the fight against Covid-19. However, there is a darker, more sinister side to the robotics industry, and in this article I will explore some important ethical issues raised by developing autonomous robots for military use.

Robots have been used in the military for many years. In 2018 the UK government purchased 56 new Harris T7 bomb disposal robots. These robots are unmanned ground vehicles (UGVs) equipped with high-definition cameras, lightning-fast datalinks, an adjustable manipulation arm and tough all-terrain treads, allowing them to neutralise a wide range of explosive threats.

Harris T7 bomb disposal robot

Their ‘advanced haptic feedback’ allows operators to ‘feel’ their way through the intricate process of disarming a device from a safe distance. This type of robot is a very positive development: it protects soldiers while they make explosives safe and deal with threats such as roadside bombs, and it prevents harm to innocent civilians. The military also uses semi-autonomous drones. These are remote-controlled robots, so there is a human in the loop who flies them, finds a target and decides when to use lethal force.

However, several countries, including the US, China, Israel, South Korea, Russia and the UK, are developing autonomous weapon systems, in which robot weapons can go out on their own, find their own targets and kill them without any human supervision. Professor Noel Sharkey from the University of Sheffield states that fully autonomous robots raise important ethical issues, such as how a machine will decide when lethal force is appropriate. This is concerning because it is unclear how this question will be resolved, and some experts believe that, if left unchallenged, these weapons could lead to a robotic arms race.

Several organisations have been campaigning against the use of robots as autonomous weapons. The Foundation for Responsible Robotics is organised by more than 20 of the world’s leading tech scholars, writers and roboticists, and is now growing rapidly with many new members and partners. The Foundation states:

“Robots are tools with no moral intelligence, which means it’s up to us – the humans behind the robots – to be accountable for the ethical developments that necessarily come with technological innovation. Addressing ethical issues in robotics and AI means proactively taking stock of the impact these innovations will have on societal values like safety, security, privacy, and well-being, rather than trying to contain the effects of robots after their introduction into society.”

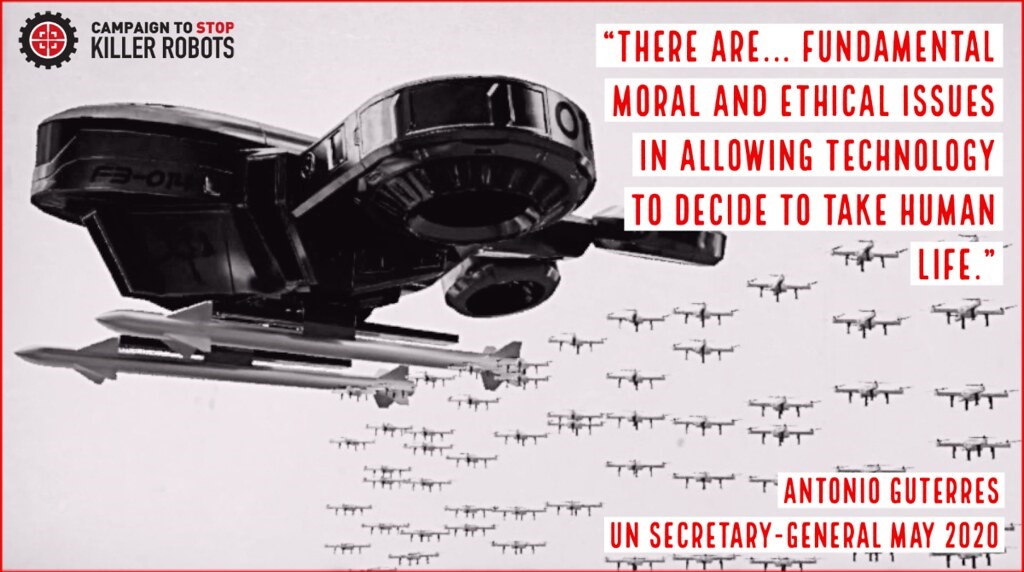

The Campaign to Stop Killer Robots is urging states to form an international treaty banning the development, production and use of fully autonomous weapons. In 2019 the campaign brought its robot campaigner to the United Nations General Assembly in New York to call for a ban on killer robots. You can watch the highlights at https://www.youtube.com/watch?v=XtvkJYkVRyg. To learn more about the threat of fully autonomous weapons, watch this eerie video at https://www.stopkillerrobots.org/learn/, which gives us an idea of what might happen if fully autonomous weapons replace troops.

Whilst robotic technology continues to be developed for all the right reasons in areas such as medicine, the care sector and agriculture, the use of robots as lethal weapons should be a huge concern for all of us. Many autonomous weapon systems are already in development, and it’s our responsibility to make sure that humans stay in control.

What are your thoughts? Will the use of military robots actually save lives, or will it make armed conflict more likely? Perhaps you’re thinking of a career in the military or IT – is this what you expected it to be like? We’d love to hear from you, so please get in touch below.

Sources:

Building a Future with Robots – The University of Sheffield, FutureLearn MOOC

https://www.gov.uk/government/news/british-army-receives-pioneering-bomb-disposal-robots

https://en.wikipedia.org/wiki/Military_robot

https://responsiblerobotics.org/

https://en.wikipedia.org/wiki/Campaign_to_Stop_Killer_Robots

About Post Author

Rebekah @ Sheffield Girls'

Hi, I’m Rebekah and I’m in Year 11. I am a Digital Leader and a Google Certified Educator Level 1. I have represented the school in Robotics Challenge Days at Rolls-Royce in Derby and at the National College for Advanced Transport & Infrastructure in Doncaster. I’ve been involved with many STEM activities and have been awarded the Bronze and Silver CREST Awards. I also graduated as an Industrial Cadet through the Go4SET scheme. I enjoy playing the violin and am part of the school ensemble. In my spare time I like hill walking, cycling and baking cupcakes 🙂

Another really interesting article, Rebekah, which raises many interesting questions for the future. Their use in supporting and preserving life is so important that it would be a shame if they started to get bad press for being used for the wrong reasons.

This is an interesting read, Rebekah, but it is also quite sobering. I fully agree that the people behind the robots should be accountable for any acts the robot carries out, but I guess the problem arises when the robot malfunctions.

A great article, Rebekah – very thought-provoking. Every area of new technology brings with it great hope for positive change, but also the potential for unethical or destructive applications. I am sure we will see lots of regulations implemented in this area as the technology develops, but also lots of scaremongering in the press, I’d imagine.

A very interesting perspective on the use of robotics. It is a slightly uncomfortable topic at times, and if you delve deeper the implications can be quite frightening. Thank you again for such a brilliant insight into this topic.

Such an interesting article, Rebekah! This topic is still such a big mystery, and I didn’t know it was such a big issue until I read the article. It was super fascinating to learn about the Campaign to Stop Killer Robots! Well done 🙂