In July 2019, I was lucky enough to meet Dr Alessandro Di Nuovo of Sheffield Hallam University, an expert in the field of Artificial Intelligence and Robotics. Dr Di Nuovo leads the Smart Interactive Technologies (SIT) Research Group and conducts internationally renowned research. As part of this research he looks at robotics for healthcare and well-being, specialising in human-robot interaction.

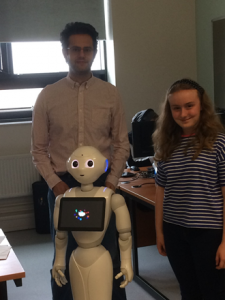

My interview with Dr Di Nuovo took place at his research lab at the University, where I was able to meet and interact with several robots, including Pepper, manufactured by SoftBank Robotics. Read on to find out more about robots and what Dr Di Nuovo has to say about them and their place in the future…

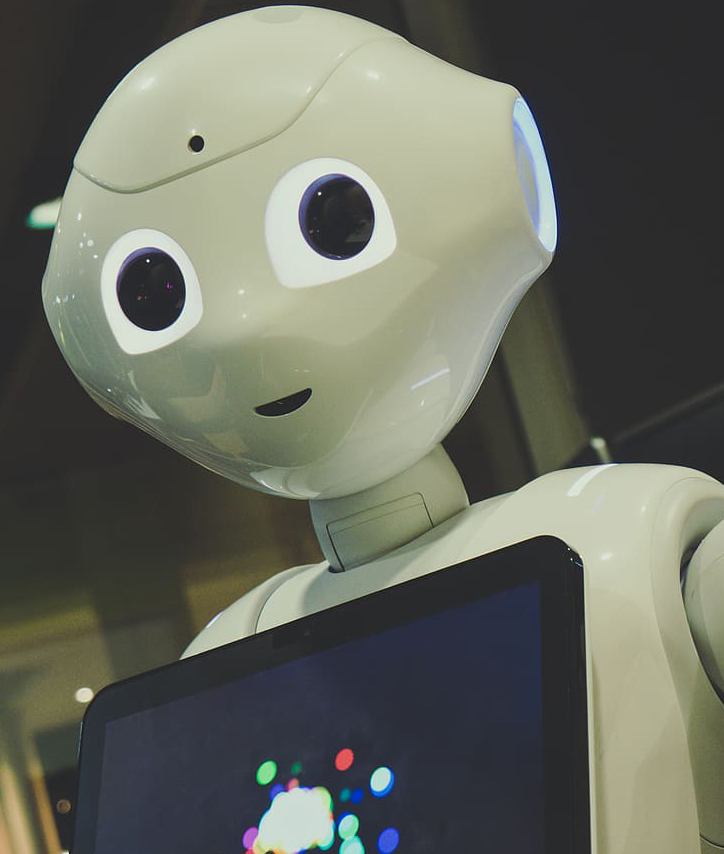

Humanoid because of the interactivity you get. It acts like an eye. It absorbs facial features and gestures and then interacts.

At school, I enjoyed Computer programming and Artificial Intelligence. Studying this in depth, I realised that these are fundamentally connected to Robotics. Indeed, we can program the AI to use the robotic body for improving the interaction while providing assistance and comfort to the elderly and children.

The work we are doing at Sheffield Children’s Hospital is to help children with anxiety. The robots are there to interact with the children and help them understand the procedures or activities that are going to be done to them.

One example is a robot having a plaster put on it – the child then knows what is going to happen. Robots are also good at mimicking, so they can do certain movements and have the children imitate them. This also has uses for physiotherapy.

Another use of robots is their interaction with the elderly. Social isolation amongst elderly people means that many do not see, let alone speak to, other people during the day. Radio and television are very passive, in that the elderly just listen. Robots can be used as social interactors: through talking and physical movement they stimulate interaction. I have done several projects looking at the impact robots have on social isolation among the elderly.

Robots are also being used to pre-screen for dementia in the elderly. In the absence of doctors or practitioners, robots can be used to screen for signs of decreased neurological capacity. In these cases the tests are unbiased and gather data that can then be sifted to give one of two decisions: no further action required, or a referral to a doctor for further investigation. There are further uses of robots as medical diagnostic tools. The imaging and detection generated by robots are already used widely in medicine.

At this stage in the interview we took a break so that I could try the dementia screening test. I found that the level of interaction was non-threatening and could see the advantage of using this diagnostic tool.

One concern is that robots are only as good as the humans who programme them. There is scope for false negatives, i.e. cases where an issue that needed further investigation is missed. False positives can be rationalised just as when humans are used, and further investigations would then give a clear diagnosis.

Yes, only because they are programmed to have feelings and to show emotions. The emotions and feelings can be shown through the connection the person has with the robot. The robot can also show feelings and emotions by facial expressions, voice and gestures.

This newspaper article of mine [from the Independent newspaper] has attracted lots of interest. I also did an interview with BBC Radio (my bit starts around 14 minutes in).

This is the journalistic report of one of our projects.

Do you agree with Dr Di Nuovo? Do you want to see more (or fewer) robots used in Education and Medicine? What do you think are the impacts of using robots in these industries?

Let us know your thoughts by leaving a comment below.

Thank you!

This article could not have been written without the enthusiasm and generous amount of time given by Dr Di Nuovo.

About Post Author

Rebekah @ Sheffield Girls'

Hi, I’m Rebekah and I’m in Year 11. I am a Digital Leader and a Google Certified Educator Level 1. I have represented the school in Robotics Challenge Days at Rolls Royce in Derby and at the National College for Advanced Transport & Infrastructure in Doncaster. I’ve been involved with many STEM activities and have been awarded the Bronze and Silver CREST awards. I also graduated as an Industrial Cadet participating in the Go4Set scheme. I enjoy playing the violin and I am part of the school ensemble. I enjoy hill walking, cycling and baking cupcakes 🙂

What a great article Rebekah. Your feature was fascinating to read and it sounds as though you must have thoroughly enjoyed your visit and interviewing Dr Di Nuovo.

Another brilliant insight into the robotic world. What a super opportunity to meet with an expert. Really enjoyed this Rebekah.

Fantastic article, Rebekah! What a wonderful opportunity to meet Dr Di Nuovo. He raises some very interesting points regarding the limitations of robots assuming human roles. Nevertheless, it will be fascinating to monitor advances in AI and robotics and their ability to assist within the fields of healthcare and medicine. Well done!

This is a fantastic interview! For better or for worse, the importance of robots is increasing, so I especially liked the question about their role in relation to humans. Great job!

A super article Rebekah; I found it fascinating. The links provided give further insight and information and it is a great idea to include them here. Dr Di Nuovo is obviously a leader in his field; it must have been great to meet and learn from him. Thank you for sharing your experience.

I’ve thoroughly enjoyed reading your article, Rebekah. It’s fascinating to hear how robots are helping us in our everyday lives and it sounds like they’re having a really positive impact on the patients at the Children’s Hospital. Superbly written!

This is an absolutely amazing article, Rebekah. It is so fascinating how robots are helping us in our lives.

This is an interesting and thought-provoking article Rebekah. It must have been really exciting to meet such an eminent figure in the field of robotics research and development and your interview touches on some key ideas. Robots of course have been working in industrial automation for many years but Dr Di Nuovo’s specialism in human interactivity is such important work and has the potential to improve the lives of so many people.

Dr Di Nuovo makes the comment that robots are only as good as the humans who instruct them. This reminds me of the second of the Three Laws of Robotics (https://en.wikipedia.org/wiki/Three_Laws_of_Robotics), devised by the science fiction writer Isaac Asimov nearly 80 years ago in 1942, which states that: ‘A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.’ Dr Di Nuovo’s observation emphasises this. Artificial Intelligence has some way to go before it is independently able to mimic the human capability for fine, nuanced judgement.

I hope that your interest continues to grow and that you can look forward to a great career in robotics. Thank you for such an excellent article.

What a great article. Sounds like you had a great time at the University. Did you get to interact with any other robots? I think it will be really interesting to see where we are in 10 years in terms of the use of robots in day to day life.